Neural Interactive Machine Learning

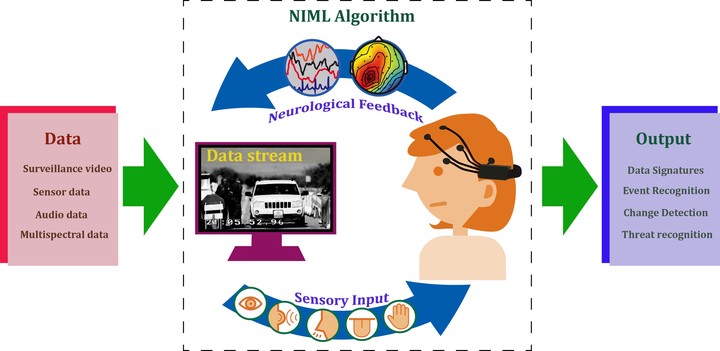

Advances in machine intelligence have raised the potential of computer-based video analytics, but machines alone still fall short of human visual recognition capabilities. Humans, in turn, cannot keep pace with the volume of data that needs to be analyzed. Despite exponential growth in machine analytics, room for improvement remains, for example reducing the size of training sets, improving cross-platform compatibility, and adapting to changing environments. This project aims to reach higher levels of performance by integrating the strengths of human and machine reasoning.

We are currently building brain-computer interfaces and machine learning methods to quantify neural signals associated with fast visual recognition. These signals are recorded with an electroencephalogram (EEG) headset worn by a human participant while they view video data. We are investigating the quality of the participant's psychological engagement and evaluating the EEG signatures for reproducibility and accuracy.
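As an illustration only, the sketch below shows one way the two criteria named above, reproducibility and accuracy, could be quantified for an EEG signature. It assumes single-channel epochs that are already time-locked to video events and labeled as target or non-target. The split-half correlation, the nearest-centroid classifier, and the synthetic data generator `synthetic_epoch` are hypothetical choices made for this example, not the project's actual pipeline.

```python
# Illustrative sketch only: assumes labeled, time-locked, single-channel EEG epochs.
# The metrics and the synthetic data below are hypothetical, not the NIML method.
import math
import random


def average_waveform(epochs):
    """Mean across epochs at each time sample (a simple evoked-response estimate)."""
    n = len(epochs)
    return [sum(samples) / n for samples in zip(*epochs)]


def pearson(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def split_half_reproducibility(epochs):
    """Correlate the average of even-indexed epochs with the average of odd-indexed ones."""
    return pearson(average_waveform(epochs[0::2]), average_waveform(epochs[1::2]))


def nearest_centroid_accuracy(train_target, train_other, test_target, test_other):
    """Label each held-out epoch by the closer class-mean waveform; return fraction correct."""
    mu_t = average_waveform(train_target)
    mu_o = average_waveform(train_other)

    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    correct = sum(sq_dist(e, mu_t) < sq_dist(e, mu_o) for e in test_target)
    correct += sum(sq_dist(e, mu_o) <= sq_dist(e, mu_t) for e in test_other)
    return correct / (len(test_target) + len(test_other))


def synthetic_epoch(rng, n_samples=50, bump=0.0, center=25, width=4.0):
    """Hypothetical epoch: unit Gaussian noise plus an optional Gaussian-shaped evoked bump."""
    return [
        bump * math.exp(-((t - center) ** 2) / (2 * width ** 2)) + rng.gauss(0.0, 1.0)
        for t in range(n_samples)
    ]


if __name__ == "__main__":
    rng = random.Random(0)
    target = [synthetic_epoch(rng, bump=5.0) for _ in range(40)]
    other = [synthetic_epoch(rng) for _ in range(40)]

    print("target reproducibility:", round(split_half_reproducibility(target), 3))
    print("non-target reproducibility:", round(split_half_reproducibility(other), 3))
    acc = nearest_centroid_accuracy(target[:20], other[:20], target[20:], other[20:])
    print("held-out accuracy:", acc)
```

On synthetic data with a clear evoked response, the target epochs should show a high split-half correlation and the held-out accuracy should be near 1.0. Real recordings would need multi-channel handling, artifact rejection, and cross-validation, all of which are omitted here.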

The Neural Interactive Machine Learning (NIML) approach is expected to substantially improve data processing in human-in-the-loop (HIL) workflows, which could benefit big data problems such as data labeling in medicine and social media.